Software Performance Tuning is the disciplined craft of making software faster, more responsive, and more efficient under load. By combining software performance profiling, application performance optimization, and targeted debugging of performance issues, teams can deliver snappier experiences and systems that scale. Effective tuning relies on benchmarking and a systematic workflow that starts by measuring real workloads and ends with measurable improvements. Key techniques include CPU and memory profiling to locate hot paths, and performance tuning tools to validate changes without overhauling the code. A sound approach aligns engineering work with user expectations, performance budgets, and business goals so that speed translates into happier users.

This discipline can also be framed as performance engineering, where understanding how code behaves under load guides improvements. Rather than chasing a single metric, teams use profiling data to map bottlenecks and then apply code and architectural changes to lower latency and raise throughput. In practice, terms such as system tuning, resource management, and runtime optimization describe the same goals from different angles. A shared vocabulary like this helps stakeholders connect day-to-day engineering work with reliability, scalability, and a better user experience.

Software Performance Tuning: Profiling, Optimizing, and Scaling for Fast, Responsive Apps

Software Performance Tuning is the deliberate practice of making software faster, more responsive, and more resource-efficient across web services, mobile apps, and data pipelines. By combining software performance profiling, targeted optimization, and careful debugging, teams can deliver snappier experiences, better scalability under load, and more predictable resource use (CPU, memory, I/O). When users expect instant responses and code ships continuously, this discipline is a competitive necessity: its aim is measurable, maintainable improvements that hold up under real-world usage patterns.

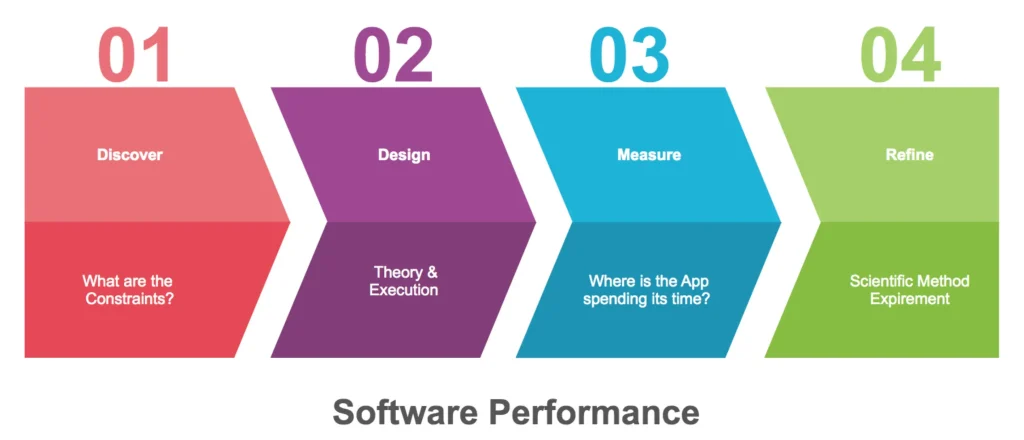

A practical workflow begins with profiling to identify hotspots, followed by data-driven prioritization and incremental changes that are easy to verify. Start with sampling to locate hotspots quickly, then move to instrumentation for deeper visibility. Use a small set of performance tuning tools suited to your stack (for example Linux perf, Instruments or DTrace on macOS, Java Flight Recorder on the JVM, or Py-Spy for Python) to establish a baseline on a representative workload and define target improvements. Emphasize CPU and memory profiling to focus attention on hot paths and memory pressure.
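As a minimal sketch of CPU profiling in Python, the example below uses the standard library's `cProfile` and `pstats` modules to rank functions by cumulative time. Note that `cProfile` is a deterministic (instrumenting) profiler rather than a sampling one; a sampling tool such as Py-Spy would instead attach to a running process. The functions `workload` and `slow_sum_of_squares` are hypothetical stand-ins for real application code.

```python
import cProfile
import io
import pstats


def slow_sum_of_squares(n: int) -> int:
    # Hypothetical hot path: deliberately converts numbers to strings and back.
    values = [str(i * i) for i in range(n)]
    return sum(int(v) for v in values)


def fast_helper(n: int) -> int:
    # Hypothetical cheap function that should NOT dominate the profile.
    return n + 1


def workload() -> int:
    # Hypothetical "representative workload" mixing hot and cheap calls.
    total = 0
    for _ in range(20):
        total += slow_sum_of_squares(10_000)
        total += fast_helper(1)
    return total


def profile_top_functions(func, limit: int = 5) -> str:
    """Run func under cProfile and return a report sorted by cumulative time."""
    profiler = cProfile.Profile()
    profiler.enable()
    func()
    profiler.disable()
    buffer = io.StringIO()
    stats = pstats.Stats(profiler, stream=buffer)
    stats.sort_stats(pstats.SortKey.CUMULATIVE).print_stats(limit)
    return buffer.getvalue()


if __name__ == "__main__":
    print(profile_top_functions(workload))
```

In the printed report, `slow_sum_of_squares` should rank near the top by cumulative time, which is the signal to investigate it before touching anything else.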

Profiling, Debugging, and Continuous Improvement: Turning Data into Reliable Performance

Profiling supplies the data needed to debug performance issues, helping teams separate symptoms from root causes and guiding application performance optimization. Document hotspots, validate assumptions by re-profiling, and map improvements to concrete metrics such as latency, throughput, and resource usage. The goal is not to fix every function but to relieve the highest-impact bottlenecks the profiling data reveals, and to establish a reusable debugging workflow.
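To make "latency" and "throughput" concrete, here is a hedged sketch that times repeated calls to a function and reports median (p50) and tail (p95) latency plus calls per second. The `measure` helper and its default of 200 iterations are illustrative assumptions; it relies only on the standard `time` and `statistics` modules (`statistics.quantiles` requires Python 3.8+).

```python
import statistics
import time


def measure(func, iterations: int = 200) -> dict:
    """Time each call to func and summarize latency percentiles and throughput."""
    latencies_ms = []
    start = time.perf_counter()
    for _ in range(iterations):
        t0 = time.perf_counter()
        func()
        latencies_ms.append((time.perf_counter() - t0) * 1000.0)
    elapsed_s = time.perf_counter() - start
    # quantiles(n=100) returns 99 cut points: index 49 is p50, index 94 is p95.
    cuts = statistics.quantiles(latencies_ms, n=100)
    return {
        "p50_ms": cuts[49],
        "p95_ms": cuts[94],
        "max_ms": max(latencies_ms),
        "throughput_per_s": iterations / elapsed_s,
    }


if __name__ == "__main__":
    print(measure(lambda: sum(range(10_000))))
```

Reporting p95 alongside p50 matters because an optimization can improve the median while leaving the slow tail, which users notice most, unchanged.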

A disciplined debugging workflow includes reliable reproduction, targeted instrumentation, and careful analysis of memory behavior, thread contention, and I/O paths. Collect heap dumps, thread dumps, and GC logs to understand memory pressure and concurrency; inspect database queries and indexing for data-access inefficiencies; and verify the latency of external services. Apply incremental fixes, re-profile to confirm each issue is resolved, and maintain automated performance tests to guard against regressions. Together, these habits are the hallmarks of a robust, maintainable performance tuning workflow.
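A thread dump captures what every thread is doing at one instant, which is how lock contention usually becomes visible. The sketch below is a rough Python analogue of a JVM thread dump, assuming CPython: it uses `sys._current_frames()` (CPython-specific) with `traceback.format_stack`. The `holder` and `waiter` functions are hypothetical and exist only to manufacture contention on a shared lock.

```python
import sys
import threading
import time
import traceback


def dump_all_threads() -> str:
    """Return a text thread dump: the current Python stack of every live thread."""
    frames = sys._current_frames()  # CPython-specific: thread id -> topmost frame
    lines = []
    for thread in threading.enumerate():
        frame = frames.get(thread.ident)
        if frame is None:
            continue
        lines.append(f"--- Thread {thread.name} (id={thread.ident}) ---")
        lines.extend(entry.rstrip() for entry in traceback.format_stack(frame))
    return "\n".join(lines)


lock = threading.Lock()


def holder(release: threading.Event) -> None:
    # Hypothetical: acquires the lock and keeps it until `release` is set.
    with lock:
        release.wait()


def waiter() -> None:
    # Hypothetical: blocks here while holder owns the lock (the contention to find).
    with lock:
        pass


if __name__ == "__main__":
    release = threading.Event()
    t1 = threading.Thread(target=holder, args=(release,), name="holder")
    t2 = threading.Thread(target=waiter, name="waiter")
    t1.start()
    time.sleep(0.1)
    t2.start()
    time.sleep(0.1)
    print(dump_all_threads())  # "waiter" appears parked on its `with lock:` line
    release.set()
    t1.join()
    t2.join()
```

The standard library's `faulthandler.dump_traceback(all_threads=True)` offers a similar dump straight to a file descriptor, which can be handy when the process is too stuck to run ordinary Python code.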

Frequently Asked Questions

What is Software Performance Tuning and how does software performance profiling drive application performance optimization?

Software Performance Tuning is the disciplined art of improving the speed, responsiveness, and resource efficiency of software systems. It begins with software performance profiling to identify real hotspots under representative workloads; CPU and memory profiling quantify where time and allocations occur, guiding targeted application performance optimization. Using a focused set of performance tuning tools, you implement measurable changes that reduce latency and resource use, and you re-profile to confirm the improvements endure.
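Memory profiling answers the "where do allocations occur" half of that question. As a minimal Python sketch, the standard `tracemalloc` module can snapshot live allocations and group them by source line. The `build_index` function is a hypothetical allocation-heavy stand-in, and the `top_allocations` helper is an illustrative wrapper, not a library API.

```python
import tracemalloc


def build_index(n: int) -> dict:
    # Hypothetical allocation-heavy function standing in for real application code.
    return {i: str(i) * 20 for i in range(n)}


def top_allocations(func, limit: int = 3):
    """Run func with tracemalloc enabled; return (result, top allocation sites by size)."""
    tracemalloc.start()
    try:
        result = func()
        # Snapshot while tracing is on and while `result` still holds its memory.
        snapshot = tracemalloc.take_snapshot()
    finally:
        tracemalloc.stop()
    # "lineno" groups allocations by source line; results are sorted largest first.
    return result, snapshot.statistics("lineno")[:limit]


if __name__ == "__main__":
    _, top = top_allocations(lambda: build_index(50_000))
    for stat in top:
        print(stat)  # source file:line, total size, block count, average size
```

Comparing two snapshots taken before and after a suspect operation (via `Snapshot.compare_to`) is a common next step when hunting a leak.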

What practical steps make up a workflow for debugging performance issues in Software Performance Tuning?

A practical workflow starts by reliably reproducing the problem in a staging environment, then using performance tuning tools to collect CPU, memory, and I/O data. Analyze the profiles to pinpoint root causes such as memory leaks, GC pressure, thread contention, or slow external calls, and form a hypothesis about the bottleneck. Implement small, verifiable optimizations (e.g., algorithmic changes, caching, data-structure tuning, or query optimizations) and re-profile after each change to verify its impact. Finally, automate benchmarking to track progress against a baseline, and document decisions so that future debugging and optimization work can build on them.
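One small, verifiable optimization of the kind listed above is a data-structure change. The sketch below swaps a list membership test (a linear scan) for a set lookup, first checks that both versions return identical results, and only then compares speed with `timeit.repeat`. The function names and the seeded random workload are hypothetical choices for illustration.

```python
import random
import timeit


def find_common_slow(a: list, b: list) -> list:
    # O(len(a) * len(b)): `x in b` scans the whole list in the worst case.
    return [x for x in a if x in b]


def find_common_fast(a: list, b: list) -> list:
    # O(len(a) + len(b)): build a set once; each lookup is O(1) on average.
    b_set = set(b)
    return [x for x in a if x in b_set]


def compare(n: int = 2_000, repeat: int = 5) -> dict:
    rng = random.Random(42)  # fixed seed keeps the workload reproducible
    a = [rng.randrange(n * 2) for _ in range(n)]
    b = [rng.randrange(n * 2) for _ in range(n)]
    # Verify the optimization preserves behavior before comparing speed.
    if find_common_slow(a, b) != find_common_fast(a, b):
        raise ValueError("optimized version changed the result")
    # min() of several runs reduces noise from other activity on the machine.
    slow = min(timeit.repeat(lambda: find_common_slow(a, b), number=1, repeat=repeat))
    fast = min(timeit.repeat(lambda: find_common_fast(a, b), number=1, repeat=repeat))
    return {"slow_s": slow, "fast_s": fast, "speedup": slow / fast}


if __name__ == "__main__":
    print(compare())
```

Checking correctness before timing keeps the change "verifiable": a faster function that returns different results is a regression, not an optimization.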

| Topic | Key Points | Notes/Examples |
|---|---|---|
| What is Software Performance Tuning? | Improves speed, responsiveness, and resource efficiency; aims for measurable, maintainable gains; applies across web services, mobile apps, embedded systems, and data processing pipelines. | Not just micro-optimizations; focuses on real-world impact. |
| Profiling | Collect data on where time is spent and how resources are allocated; identify hotspots; separate assumptions from measured facts; establish a baseline with a representative workload. | Key metrics: CPU time/usage, function execution time, memory allocations, I/O wait, cache misses. |
| Profiling Methods | Sampling vs. instrumentation; pragmatic approach: start with sampling to identify hotspots, then instrument for deeper investigation. | Tools vary by ecosystem (perf, Instruments, WPR/WPA, DTrace, Java Flight Recorder, cProfile, Py-Spy, etc.). |
| From Profiling to Optimization | Validate the hotspot, prioritize changes, implement them incrementally, re-profile after each change, document decisions. | Optimization categories: algorithmic improvements, data structures, caching, parallelism, I/O, memory management, platform tuning. |
| Debugging Performance Issues | Diagnose root causes (memory leaks, GC pressure, thread contention, slow I/O); reproduce reliably; collect heap/thread dumps; analyze queries and dependencies. | Repeatable reproduction and targeted instrumentation are essential. |
| Case Study: REST API | Baseline → hotspot identification → targeted optimizations; refactor to reduce complexity; add a cache; optimize queries. | P95 latency reductions illustrate the impact of profiling and optimization. |
| Best Practices | Profile early and often; define metrics/SLOs; automate benchmarking; separate concerns; instrument carefully; focus on real workloads; document decisions; balance stability and speed; foster a performance culture. | Focus on durable improvements that align with business goals. |
| Tools and Practical Advice | Use a focused set of profiling tools for CPU, memory, I/O, concurrency, and databases; build repeatable workflows; tie improvements to business outcomes. | Choose the right toolset and workflow rather than chasing every new tool. |
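The "automate benchmarking" practice could be wired into a test suite or CI job roughly as follows. This is a sketch under stated assumptions: the JSON baseline file layout, the helper names, and the 20% tolerance are all hypothetical choices, not a standard tool. The first run records a baseline median; later runs fail the check if the median slows down beyond the tolerance.

```python
import json
import statistics
import time
from pathlib import Path


def median_runtime(func, runs: int = 15) -> float:
    """Median wall-clock time of func in seconds (median resists outliers)."""
    samples = []
    for _ in range(runs):
        t0 = time.perf_counter()
        func()
        samples.append(time.perf_counter() - t0)
    return statistics.median(samples)


def check_against_baseline(name: str, func, baseline_file: Path,
                           tolerance: float = 0.20) -> bool:
    """Return False if func is more than `tolerance` slower than its recorded baseline.

    Hypothetical file format: {"benchmark name": median_seconds, ...}.
    The first run for a given name records the baseline and passes.
    """
    baselines = json.loads(baseline_file.read_text()) if baseline_file.exists() else {}
    current = median_runtime(func)
    if name not in baselines:
        baselines[name] = current
        baseline_file.write_text(json.dumps(baselines, indent=2))
        return True
    return current <= baselines[name] * (1.0 + tolerance)


if __name__ == "__main__":
    ok = check_against_baseline("sum_range", lambda: sum(range(100_000)),
                                Path("perf_baseline.json"))
    print("within budget" if ok else "performance regression detected")
```

In a CI pipeline, a `False` result would typically fail the build; the baseline should be re-recorded deliberately (and reviewed) when an intentional change shifts performance.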

Summary

Software Performance Tuning is a continuous journey of profiling, optimizing, and debugging to deliver faster, more scalable software. In practice, teams begin with baseline profiling to locate real hotspots, implement targeted optimizations, and validate improvements against representative workloads. By integrating profiling into development and deployment pipelines, defining clear metrics, and cultivating a data-driven culture, organizations can reduce latency, increase throughput, and improve user experience. The cycle of profile, optimize, validate, and repeat helps ensure resilience under load and readiness for tomorrow’s challenges.